The digital marketing landscape has undergone a seismic shift, fundamentally altering the DNA of customer acquisition. We have transitioned from an era of manual bid adjustments and granular keyword sculpting to an epoch dominated by algorithmic opacity and machine learning. Today, AI advertising campaigns are not merely an option; they are the default operating system for platforms like Google, Meta, and TikTok. With approximately 84% of marketers now integrating artificial intelligence into their advertising workflows, one would expect a golden age of efficiency: a time when Return on Ad Spend (ROAS) is maximized and Cost per Acquisition (CPA) is minimized through the ruthless precision of silicon.

Yet, the reality on the ground is starkly different. A paradoxical trend has emerged in the post-2024 marketing ecosystem: as AI adoption skyrockets, so does widespread campaign failure. Small business owners and marketing directors are reporting volatile performance, inexplicable spikes in CPA, and a “black box” frustration where budget is consumed with alarming speed and opaque results. The promise of AI campaigns—that of a self-driving revenue engine—often collapses into a reality of wasted spend and generic, robotic output.

This comprehensive report serves as a forensic analysis of this failure. It is not a critique of the technology itself, but rather an examination of the structural, strategic, and data-centric deficiencies that cause AI tools for marketing campaigns to underperform. More importantly, it provides a rigorous, battle-tested framework for recovery. Drawing on methodologies utilized by elite agencies like Power Reach AI, we will dissect the root causes of failure and provide a 48-hour turnaround plan designed to stabilize hemorrhaging accounts and restore profitability.

The State of AI Advertising: A Crisis of Strategy

To understand why campaigns fail, we must first understand the environment in which they operate. The modern ad platform is a prediction machine. Whether it is Google’s Performance Max (PMax) or Meta’s Advantage+, the core mechanism is identical: the algorithm analyzes billions of signals—user behavior, contextual relevance, historical data—to predict the probability of a conversion.

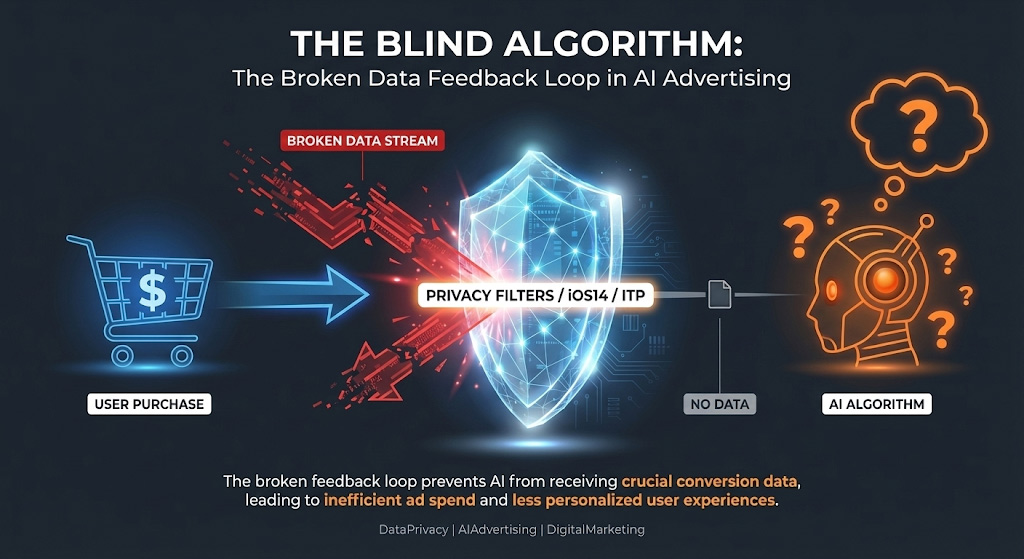

However, prediction is only as good as the signal it receives. We are currently navigating a “Signal Crisis.” Privacy initiatives, browser restrictions such as Safari’s Intelligent Tracking Prevention (ITP), and the erosion of third-party cookies have blinded the very algorithms we rely on. Simultaneously, the ease of launching AI advertising campaigns has lowered the barrier to entry, flooding the auction with low-quality, AI-generated noise. The result is a perfect storm: blinded algorithms bidding aggressively in a saturated market, often optimizing for the wrong outcomes.

This report will explore five hidden reasons for this underperformance, moving beyond surface-level observations to the mathematical and structural root causes. We will then transition into a practical 30-minute audit and a 48-hour fix, ensuring that your business can leverage the true power of AI—not as a replacement for human strategy, but as its ultimate accelerant.

5 Hidden Reasons AI Advertising Campaigns Underperform

When an AI campaign underperforms, the instinct is often to blame the creative or the budget. However, deep audits of thousands of ad accounts reveal that these are rarely the primary culprits. The true failures are systemic—embedded in how the AI is fed, guided, and interpreted.

1. The Data Feedback Loop is Broken (The “Garbage In, Garbage Out” Crisis)

The most critical point of failure in modern AI advertising campaigns is data integrity. AI bidding strategies, specifically Target CPA (tCPA) and Target ROAS (tROAS), are statistical models. They do not “think” in the human sense; they calculate probabilities based on historical success. If the data feeding these calculations is corrupted, incomplete, or delayed, the AI builds a predictive model based on hallucinations.

The Invisible Conversion and the Privacy Gap

The first dimension of this failure is signal loss. Since the introduction of iOS 14.5 and the subsequent tightening of privacy standards by browsers like Safari and Firefox, client-side tracking (pixels) has become notoriously unreliable. Research indicates that ad platforms often report a “version of reality” rather than reality itself. A user may click an ad, browse the site, and purchase, but if their browser blocks the conversion pixel or strips the tracking parameters, the ad platform registers this as a non-event.

This creates a disastrous feedback loop. The AI “thinks” the ad failed. It looks at the user profile of the person who bought (but wasn’t tracked) and decides not to bid on similar users in the future. In reality, that user might have been the perfect customer. By failing to track the success, the AI optimizes away from your most profitable audience segments. A campaign might show a $150 CPA on the dashboard, leading a marketer to pause it, when in reality, invisible conversions mean the true CPA is $105, making it the top performer.
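To make the $150-versus-$105 example concrete, here is a minimal Python sketch. The figures are hypothetical, chosen only so they reproduce those two CPAs: $3,150 of spend, 21 conversions the platform recorded, and 9 further sales that show up only in the backend. It assumes those backend sales can actually be tied to the campaign (for example via order IDs or click IDs), which is the hard part in practice.

```python
def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition; raises if nothing converted."""
    if conversions <= 0:
        raise ValueError("CPA is undefined without conversions")
    return spend / conversions


spend = 3150.00      # hypothetical 30-day spend
tracked = 21         # conversions the ad platform reports
untracked = 9        # hypothetical backend sales the pixel never saw

dashboard_cpa = cpa(spend, tracked)
true_cpa = cpa(spend, tracked + untracked)
invisible_share = untracked / (tracked + untracked)

print(f"Dashboard CPA: ${dashboard_cpa:.2f}")
print(f"True CPA:      ${true_cpa:.2f}")
print(f"Invisible conversions: {invisible_share:.0%}")
```

In this example a 30% tracking gap is enough to make the campaign look roughly 43% more expensive than it really is.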

The Phantom Conversion and the Bot Economy

Conversely, and perhaps more dangerously, AI campaigns are susceptible to “phantom conversions.” This occurs when the AI optimizes for “soft” signals that are easily forged by bots.

Sophisticated click farms and automated scripts constantly scour the web, interacting with ads to mask their true purpose or to artificially inflate publisher revenue. These bots can fire page view events, “Add to Cart” events, and even fill out lead forms with junk data. If an AI advertising campaign is optimizing for “Traffic” or “Leads” without a strict quality filter, it will interpret this bot activity as success.

The algorithm detects a pattern: “Users with Profile X (bots) convert at a high rate.” It then aggressively shifts budget to find more users with Profile X. This leads to the “Death Spiral of Low Quality,” where a campaign reports fantastic vanity metrics—high click-through rates (CTR), low cost-per-lead (CPL)—but generates zero revenue.

Table 1: The Data Quality Spectrum and AI Interpretation

| Data State | AI Interpretation | Strategic Consequence |
| --- | --- | --- |
| High Fidelity (Clean) | “This specific user behavior leads to revenue.” | Budget flows to high-LTV, legitimate prospects. |
| Signal Loss (ITP/Blockers) | “This ad spend resulted in zero action.” | AI abandons profitable demographics; scale is artificially capped. |
| Polluted (Bot Traffic) | “This behavior (bot click) is a conversion.” | AI optimizes for fraud; the budget is incinerated on non-human traffic. |
| Delayed (Attribution Lag) | “Immediate conversion did not occur.” | AI under-bids on high-ticket, long-consideration cycles. |

2. The “Set It and Forget It” Fallacy and Algorithmic Drift

A prevailing myth, often perpetuated by the platforms themselves, is that AI tools for marketing campaigns are autonomous agents requiring zero supervision. The narrative of “set it and forget it” is a primary driver of campaign stagnation. While AI excels at real-time execution (adjusting bids in milliseconds based on auction dynamics), it lacks strategic context and foresight.

The Phenomenon of Algorithmic Drift

Without human guardrails, AI models are prone to drift. An algorithm is designed to find the path of least resistance to the goal. If the goal is “Conversions” and the constraint is “CPA < $50,” the AI will find the easiest conversions first.

Often, the easiest conversions are existing customers or users who were already searching for the brand. In a Performance Max campaign, the AI might discover that retargeting past purchasers on YouTube is the cheapest way to get a “win.” It will then aggressively steer budget toward these warm audiences, inflating the reported ROAS. The dashboard looks green, but the campaign is failing to drive incremental lift—it is simply harvesting demand that arguably would have converted anyway, rather than creating new demand.
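One common way to check whether a campaign is creating demand or merely harvesting it is a holdout (lift) test: withhold ads from a random control group and compare purchase rates. The sketch below uses hypothetical numbers and the simplifying assumption that the dashboard credits every purchase by an exposed user to the ads; it contrasts that reported ROAS with ROAS on incremental sales only.

```python
def roas_report(spend, exposed_users, exposed_buyers,
                holdout_users, holdout_buyers, avg_order_value):
    """Compare dashboard-style ROAS with ROAS on incremental buyers only."""
    exposed_rate = exposed_buyers / exposed_users
    baseline_rate = holdout_buyers / holdout_users        # buying rate with no ads
    incremental_buyers = (exposed_rate - baseline_rate) * exposed_users

    reported = exposed_buyers * avg_order_value / spend   # credits every exposed buyer
    incremental = incremental_buyers * avg_order_value / spend
    return reported, incremental


# Hypothetical test: 10,000 users per arm, $100 average order, $2,000 spend.
reported, incremental = roas_report(
    spend=2000, exposed_users=10_000, exposed_buyers=200,
    holdout_users=10_000, holdout_buyers=140, avg_order_value=100,
)
print(f"Reported ROAS:    {reported:.1f}x")
print(f"Incremental ROAS: {incremental:.1f}x")
```

Here 140 of the 200 purchases would have happened without the ads, so a 10x dashboard ROAS shrinks to about 3x once only incremental sales are counted.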

Inability to Detect Fatigue

Furthermore, AI struggles to identify ad fatigue in the nuanced way a human strategist can. A human marketer understands that showing the same creative to a user ten times in a week creates negative brand sentiment. The AI, looking only at the math, might see that the 10th impression still has a marginal probability of conversion and keep spending. This leads to a precipitous drop in performance (a “cliff”) rather than a gradual decline, as the audience collectively tunes out the repetitive messaging.

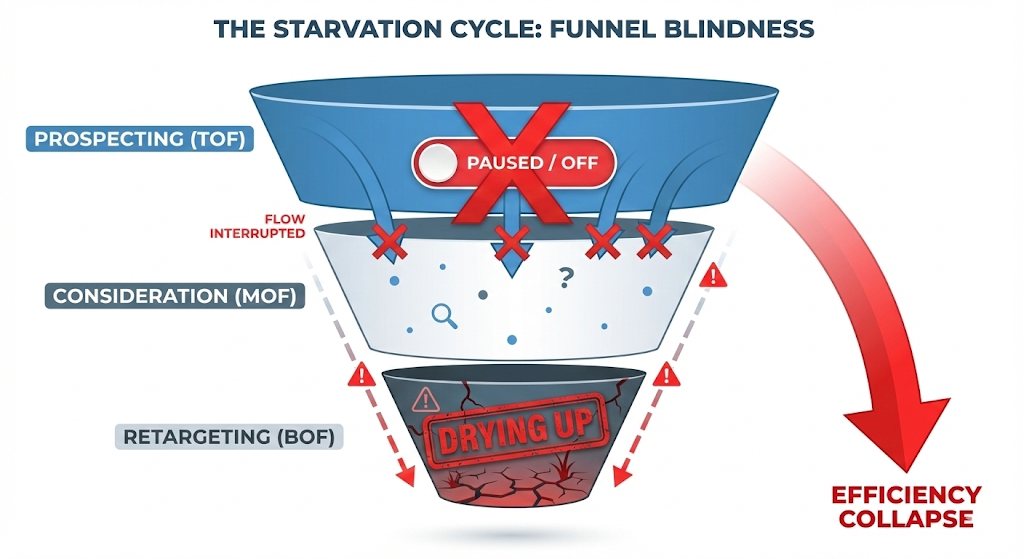

3. Funnel Blindness: The Assassination of Prospecting

One of the most counterintuitive reasons for an advertising campaign failure is the mismanagement of the marketing funnel. In a bid to lower blended CPA, marketers often pause Top-of-Funnel (TOF) ads because they appear expensive on a Last-Click attribution basis.

The Symbiosis of High and Low CPA

Data analysis of high-performing Meta ad accounts reveals a symbiotic relationship between expensive prospecting ads and cheap retargeting ads. “High-CPA” ads are often the prospectors—they are doing the heavy lifting of introducing the brand to cold audiences. They naturally have a lower conversion rate and higher cost.

“Low-CPA” ads are the harvesters—they retarget users who have already been warmed up.

When a marketer pauses the “expensive” prospecting ads to save money, they cut off the fuel supply to the retargeting pool. For a week or two, performance might look efficient as the AI harvests the remaining users in the funnel. But soon, the retargeting audience dries up, and the entire campaign efficiency collapses. The failure here is not the AI; it is the human operator judging a “Hunter” by the metrics of a “Farmer.”
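The dynamic can be illustrated with a deliberately simple toy model (not a forecast): prospecting adds warm users to a retargeting pool each day, a fixed share of the pool converts, and another share ages out of the audience window. All rates below are hypothetical.

```python
def retargeting_conversions(days, prospecting_stops_on_day, inflow_per_day=1000,
                            exit_rate=0.10, conversion_rate=0.02):
    """Toy model of daily conversions harvested from a warm retargeting pool.

    Prospecting adds `inflow_per_day` warm users until it is paused; each day a
    share of the pool converts and another share ages out of the audience window.
    """
    pool = inflow_per_day / (exit_rate + conversion_rate)   # start at steady state
    daily = []
    for day in range(days):
        daily.append(pool * conversion_rate)
        inflow = inflow_per_day if day < prospecting_stops_on_day else 0
        pool = pool * (1 - exit_rate - conversion_rate) + inflow
    return daily


# Hypothetical scenario: prospecting is paused on day 0.
daily = retargeting_conversions(days=22, prospecting_stops_on_day=0)
for day in (0, 7, 14, 21):
    print(f"day {day:2d}: {daily[day]:6.1f} retargeting conversions")
```

With these made-up rates, daily retargeting conversions fall by more than half within a week of pausing prospecting and to roughly a sixth within two weeks, mirroring the “efficient for a week or two, then collapse” pattern described above.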

Table 2: The Funnel Ecosystem & AI Metrics

| Funnel Stage | AI Role | Typical Metric Profile | Strategic Value |
| --- | --- | --- | --- |
| Prospecting (TOF) | Explore new audiences, test hooks. | High CPA, Low ROAS, High Reach. | Feeds the pixel with new data; prevents audience decay. |
| Consideration (MOF) | Educate and differentiate. | Moderate CPA, Avg ROAS. | Builds trust; filters qualified leads from curious clickers. |
| Retargeting (BOF) | Close the sale. | Low CPA, High ROAS, High Freq. | Captures revenue; maximizes LTV. |

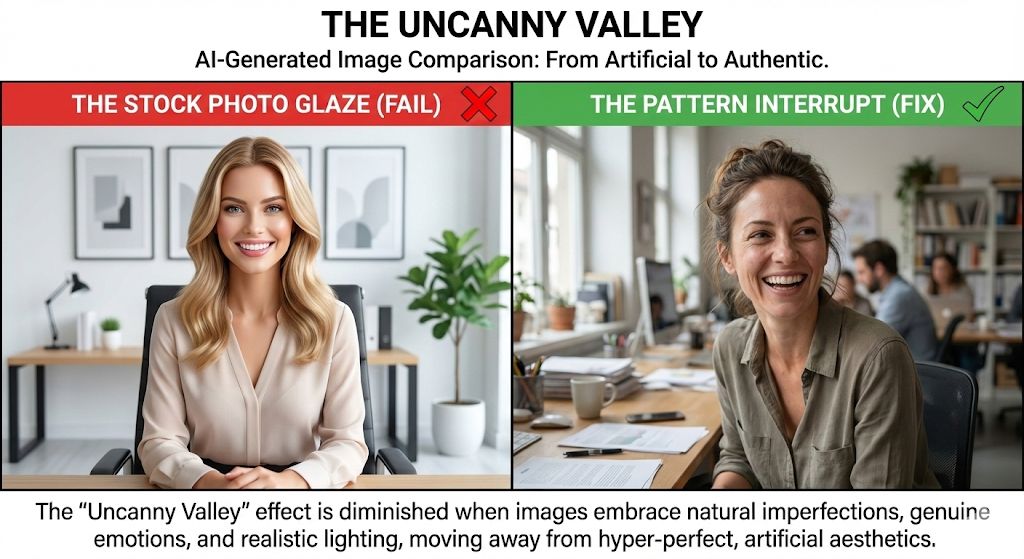

4. Creative Homogeneity: The “Robotic” Uncanny Valley

As generative AI tools for marketing campaigns (like ChatGPT, Midjourney, and Jasper) become ubiquitous, a new failure mode has emerged: Creative Homogeneity. Large Language Models (LLMs) tend to converge on “average” outputs, statistically probable sequences of words that are technically correct but emotionally inert.

The “Delve” and “Unlock” Problem

AI-generated copy frequently lacks psychological nuance. It struggles to create genuine urgency or craft compelling hooks that grab attention from the first line. Instead, it relies on patterns it has seen millions of times. Words like “delve,” “unlock,” “unleash,” “elevate,” and phrases like “In the ever-evolving landscape” are hallmarks of AI writing.

Consumers have developed a subconscious filter for this “corporate robotic” tone. When every competitor in a niche uses the same LLM to write their ads, differentiation evaporates. The ads blend into a beige wall of sameness. The AI might be optimizing the delivery perfectly, but the content is failing to trigger the dopamine response required to stop the scroll.

Visual Hallucinations and Stock-Photo Fatigue

Similarly, AI-generated imagery can suffer from the “uncanny valley” effect, where humans look slightly artificial, lighting is inconsistent, or text is rendered as gibberish (hallucinations). Even when the image is perfect, it often resembles a high-quality stock photo because that is what the model was trained on. In a social media environment that rewards authenticity and “raw” content, polished AI images can signal “ad” too quickly, causing users to scroll past before the message is received.

5. Contextual Misalignment and Intent Mismatch

The final hidden reason for failure lies in the nuances of context. AI targeting mechanisms, particularly in Google’s “Broad Match” or PMax, can misinterpret user intent.

The Keyword vs. Intent Gap

An AI might match the keyword “best CRM for small business” with a search query for “free CRM templates excel.” Semantically, these are related. Mathematically, they are close vectors. However, the commercial intent is vastly different. The first user is looking to buy software; the second is looking for a free spreadsheet. The AI sees a “match” and spends the budget.

Unless the campaign structure forces the AI to distinguish between these intents (via negative keywords or audience signals), it will waste significant budget on “curiosity clicks” that have zero probability of becoming revenue.
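A first pass over a search-terms report can be automated. This sketch assumes a hypothetical export of (query, cost) pairs and a hand-written list of intent-mismatch tokens; real negative keywords also carry match types, which this simple token check only approximates.

```python
# Hypothetical tokens that signal research or freebie intent rather than purchase intent.
NEGATIVE_TOKENS = {"free", "template", "templates", "excel", "diy"}


def split_search_terms(rows, negative_tokens=NEGATIVE_TOKENS):
    """Split (query, cost) rows into (keep, exclude) lists by token overlap."""
    keep, exclude = [], []
    for query, cost in rows:
        tokens = set(query.lower().split())
        target = exclude if tokens & negative_tokens else keep
        target.append((query, cost))
    return keep, exclude


report = [  # hypothetical search-terms export
    ("best crm for small business", 42.10),
    ("free crm templates excel", 18.75),
    ("crm software pricing", 27.30),
    ("crm template free download", 9.60),
]
keep, exclude = split_search_terms(report)
wasted = sum(cost for _, cost in exclude)
print("Candidate negatives:", [query for query, _ in exclude])
print(f"Spend on mismatched intent: ${wasted:.2f}")
```

The output is a shortlist for a human to review, not an automatic exclusion list; some “free” queries may still be worth keeping for a freemium product.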

Brand Safety and Placement Hallucinations

In automated display networks, this contextual blindness can lead to brand safety disasters. An AI might place a premium brand’s advertisement on a site that, while mathematically relevant to the keyword, is tonally disastrous (e.g., placing a luxury travel ad on a conspiracy theory blog because the keyword overlap exists). This not only wastes money but damages brand equity.

The 30-Minute Audit: Diagnosing the Hemorrhage

Before implementing any fixes, a rapid diagnostic audit is required. This is not a deep, month-long consulting project; it is a tactical triage designed to be completed in under 30 minutes using standard ad platform dashboards (Google Ads, Meta Business Suite) and Google Analytics 4 (GA4). This framework mirrors the initial “health check” performed by Power Reach AI during their comprehensive client onboardings.

Step 1: The Data Integrity Check (Time: 5 Minutes)

Goal: Determine if the AI is flying blind or hallucinating success.

- Pixel Firing Verification:

- Action: Install the “Meta Pixel Helper” or “Google Tag Assistant” Chrome extension.

- Test: Navigate to your conversion page (e.g., the “Thank You” page after a purchase).

- Check: Does the Purchase or Lead event fire? Does it fire only once?

- Red Flag: If the pixel fires twice (duplication), your ROAS is artificially doubled in the reports. If it doesn’t fire, the AI is blind.

- Match Quality Score Analysis:

- Action: Go to Meta Events Manager > Data Sources > Select Pixel. Look at the “Event Match Quality” score for your Purchase/Lead event.

- Benchmark: A score below 6.0/10 indicates severe signal loss. The AI is not receiving enough data to identify who your customers are. This indicates an urgent need for Conversions API (CAPI) implementation.

- The “Reality Check” Discrepancy:

- Action: Open your backend (Shopify/WooCommerce/CRM) and check total sales for the last 30 days. Open Ads Manager and check reported sales.

- Benchmark: A discrepancy of 10-15% is normal due to attribution windows. A discrepancy of >20% suggests a broken data loop or heavy bot traffic.
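Two of these checks lend themselves to a quick script. The sketch below assumes you can export raw Purchase events with their order IDs (the field names are hypothetical) and read total sales from both the backend and Ads Manager. The thresholds mirror the benchmarks above; treating the 15-20% band as “watch” and reading the sign of the gap (over- versus under-reporting) are this sketch’s own assumptions.

```python
from collections import Counter


def duplicate_order_ids(events):
    """Order IDs that fired the Purchase event more than once."""
    counts = Counter(e["order_id"] for e in events if e["event"] == "Purchase")
    return sorted(order_id for order_id, n in counts.items() if n > 1)


def discrepancy(backend_sales, platform_sales):
    """Relative gap; positive means the ad platform reports MORE than the backend."""
    return (platform_sales - backend_sales) / backend_sales


def classify_gap(gap):
    size = abs(gap)
    if size <= 0.15:
        return "normal: attribution-window noise"
    if size <= 0.20:
        return "watch"
    if gap > 0:
        return "over-reporting: check duplicate events and bot traffic"
    return "under-reporting: likely signal loss"


events = [  # hypothetical raw event export
    {"event": "Purchase", "order_id": "1001"},
    {"event": "Purchase", "order_id": "1001"},   # same order fired twice
    {"event": "Purchase", "order_id": "1002"},
    {"event": "PageView", "order_id": "1002"},
]
print(duplicate_order_ids(events))
print(classify_gap(discrepancy(backend_sales=400, platform_sales=520)))
print(classify_gap(discrepancy(backend_sales=400, platform_sales=300)))
```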

Step 2: The Funnel & Frequency Scan (Time: 10 Minutes)

Goal: Identify if you are burning out your audience or starving your funnel.

- Frequency Analysis:

- Action: In Meta Ads Manager, customize columns to show “Frequency.” Sort ads by “Amount Spent” (High to Low).

- Analysis: Look at your top spenders.

- Red Flag: High spend on ads with Frequency > 4.0 (over 7 days). You are pummeling the same people. The creative is fatigued.

- Red Flag: High CPA on ads with Frequency < 1.2. You are killing potential winners (prospecting ads) before they have a chance to optimize. You are guilty of “Funnel Blindness.”

- Asset Group Segmentation (Google PMax):

- Action: Open your Performance Max campaign > Asset Groups.

- Analysis: Are you running a single Asset Group for all products?

- Red Flag: Lumping everything together prevents the AI from customizing the creative to the audience. You need to segment by product category (e.g., “Men’s Shoes” vs. “Women’s Accessories”) to give the AI proper creative signals.
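The two frequency red flags can be applied mechanically to an exported ads report. In this sketch, the `AdStats` fields, the “high spend” cutoff, and the sample ads are hypothetical; the 4.0 and 1.2 frequency thresholds come from the checklist above, and the 3x-target-CPA guard comes from the pause rule in the 48-hour plan below.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    name: str
    spend: float        # spend over the last 7 days
    frequency: float    # 7-day frequency
    conversions: int


def frequency_flags(ads, target_cpa, high_spend):
    """Flag high-spend ads that are fatigued or are prospecting 'feeders'."""
    flags = {}
    for ad in ads:
        if ad.spend < high_spend:
            continue
        cpa = ad.spend / ad.conversions if ad.conversions else float("inf")
        if ad.frequency > 4.0:
            flags[ad.name] = "fatigued: refresh creative"
        elif ad.frequency < 1.2 and cpa > target_cpa:
            if cpa > 3 * target_cpa:
                flags[ad.name] = "pause candidate: CPA above 3x target"
            else:
                flags[ad.name] = "prospecting feeder: keep running"
    return flags


ads = [  # hypothetical report rows
    AdStats("UGC hook A", spend=900, frequency=5.1, conversions=30),
    AdStats("Cold video B", spend=800, frequency=1.1, conversions=10),
    AdStats("Cold static C", spend=700, frequency=1.05, conversions=0),
    AdStats("Retarget D", spend=600, frequency=2.5, conversions=40),
]
for name, flag in frequency_flags(ads, target_cpa=40, high_spend=500).items():
    print(f"{name}: {flag}")
```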

Step 3: The Creative & Copy “Turing Test” (Time: 10 Minutes)

Goal: Detect robotic, low-converting AI content.

- The “Delve” Test:

- Action: Read the copy of your top 5 performing ads.

- Check: Do they contain the words “Unlock,” “Elevate,” “Discover,” “Delve,” or phrases like “In today’s fast-paced world”?

- Red Flag: These are generic AI-isms. They signal to the user that “this is an ad” and “nobody wrote this.” This lack of emotional hook kills conversion rates.

- Visual Artifact Check:

- Action: Zoom in on your AI-generated images. Look at hands, text on background objects, and lighting shadows.

- Red Flag: Any physical impossibility breaks immersion. Also, check for the “Stock Photo Glaze”—perfect lighting, generic smiles. If it looks like a stock photo, it performs like a stock photo (poorly).
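The “Delve” test is easy to run over an export of ad copy. The word list below combines the terms named in this audit with those flagged earlier in this report; it is a starting point, not an authoritative list, and a match is a prompt to reread the ad rather than proof that a machine wrote it.

```python
import re

AI_ISMS = [
    "unlock", "elevate", "discover", "delve", "unleash",
    "in today's fast-paced world", "in the ever-evolving landscape",
]


def find_ai_isms(copy):
    """Return the AI-isms found in a piece of ad copy, in list order."""
    text = copy.lower().replace("\u2019", "'")   # treat curly apostrophes as straight
    hits = []
    for phrase in AI_ISMS:
        # \b anchors the start of the phrase, so "undiscovered" is not a hit,
        # while prefix matching still catches "unlocks" or "elevated".
        if re.search(r"\b" + re.escape(phrase), text):
            hits.append(phrase)
    return hits


ads = {  # hypothetical ad copy
    "Ad 1": "Unlock your potential and elevate your morning routine.",
    "Ad 2": "Tired of cold coffee by 9am? So were we.",
    "Ad 3": "In today\u2019s fast-paced world, you deserve better sleep.",
}
for name, copy in ads.items():
    print(name, find_ai_isms(copy) or "clean")
```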

Step 4: Budget & Bidding Review (Time: 5 Minutes)

Goal: Ensure financial constraints aren’t choking the AI.

- Budget-to-Bid Ratio:

- Action: Check your Target CPA (tCPA) setting versus your Daily Budget.

- Benchmark: Your daily budget should be at least 5x to 10x your target CPA.

- Reasoning: If your tCPA is $50 and your budget is $100, the AI can only “afford” to buy 2 conversions a day. This is statistically insignificant for machine learning. The AI needs room to fail in order to learn. If the ratio is too tight (e.g., 1x or 2x), the campaign will never exit the learning phase.

- “Limited by Budget” Status:

- Action: Check Google Ads status column.

- Red Flag: If “Limited by Budget” appears, the AI sees opportunity but is capped. It may start buying cheaper, lower-quality inventory just to spend the budget, rather than buying the right inventory.
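The budget-to-bid arithmetic can be checked in a few lines. As an extra yardstick, the sketch compares best-case weekly conversion volume with the roughly 50 optimization events per week that Meta commonly cites for an ad set to exit its learning phase; treat that figure as a platform guideline that may change. The calculation also assumes every conversion lands exactly at target CPA.

```python
def budget_check(daily_budget, target_cpa, min_ratio=5.0, weekly_events_needed=50):
    """Best-case learning volume for a given daily budget and target CPA."""
    ratio = daily_budget / target_cpa
    max_weekly_conversions = ratio * 7          # if every conversion costs exactly tCPA
    return {
        "ratio": ratio,
        "max_weekly_conversions": max_weekly_conversions,
        "ratio_ok": ratio >= min_ratio,
        "enough_for_learning": max_weekly_conversions >= weekly_events_needed,
    }


for budget in (100, 250, 500):
    print(budget, budget_check(daily_budget=budget, target_cpa=50))
```

Note the middle case: a 5x ratio passes the rule of thumb above yet still tops out at about 35 conversions a week, so accounts leaning on a weekly-events guideline may want to sit closer to the 10x end.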

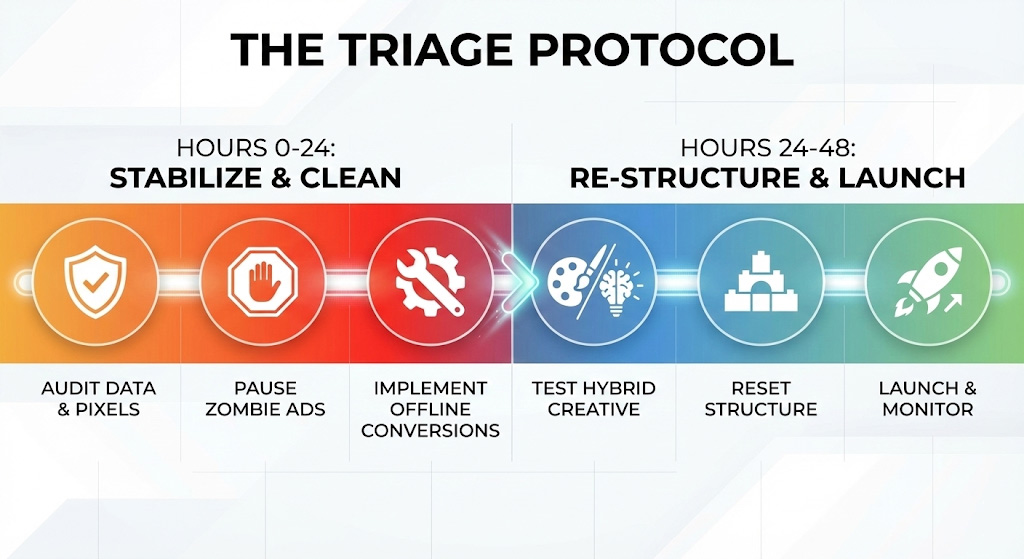

The 48-Hour Turnaround Plan

Once the audit is complete, immediate action is required. This is not a gradual optimization; it is a structural reset. The goal is to stabilize performance without triggering the dreaded “learning phase reset” that sends performance into a week-long tailspin.

Phase 1: Stabilization & Data Cleaning (Hours 0-12)

Objective: Stop the bleeding and re-establish the “ground truth” for the AI.

- Pause the “Zombies” (Hour 1):

- Identify ads with high spend and zero conversions over the last 30 days. Pause them immediately.

- Crucial Caveat: Do not pause high-CPA ads that have low frequency (prospecting ads) unless their CPA is >3x the target. These are your “feeders.”

- Implement Offline Conversion Import (OCI) (Hours 2-6):

- This is the most powerful fix for the “Invisible Conversion” problem.

- Step A: Export your customer list from your CRM/Shopify for the last 90 days (include Email, Phone, First Name, Last Name, Order Value, Order ID).

- Step B: Format this CSV according to Google Ads (Offline Conversions) and Meta (Offline Events) templates.

- Step C: Upload. This tells the AI: “Ignore the pixel issues; these are the humans who actually gave us money.” It forces the algorithm to re-calibrate based on hard revenue data, not flaky browser signals.

- Exclusion Protocols (Hours 6-12):

- Brand Exclusions: For PMax, request a “Brand Exclusion List” to stop the AI from bidding on your own brand name (unless that is a specific strategy). This forces the AI to hunt for new customers rather than cannibalizing organic traffic.

- Placement Cleaning: In Google Display/PMax settings, exclude “Mobile Apps” (often high accidental clicks/bots) and “Unknown” placements.
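Step B of the OCI upload is where files most often get rejected, so here is a minimal sketch of preparing one. It assumes the platform accepts SHA-256 hashes of normalized email addresses (both Google Ads and Meta document hashed customer-data uploads, each with its own normalization rules) and uses placeholder column names, not either platform’s official template headers. Google’s offline imports are also commonly keyed on click IDs (GCLID); check the current template before uploading.

```python
import csv
import hashlib
import io

# Placeholder headers: replace with the exact columns from each platform's template.
FIELDS = ["hashed_email", "order_id", "conversion_time", "order_value", "currency"]


def normalize_email(email: str) -> str:
    """Basic normalization before hashing: trim whitespace, lowercase."""
    return email.strip().lower()


def sha256_hex(value: str) -> str:
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def build_upload(orders) -> str:
    """Render backend orders as CSV text, with emails hashed rather than in plain text."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS)
    writer.writeheader()
    for order in orders:
        writer.writerow({
            "hashed_email": sha256_hex(normalize_email(order["email"])),
            "order_id": order["order_id"],
            "conversion_time": order["time"],
            "order_value": f"{order['value']:.2f}",
            "currency": order["currency"],
        })
    return buf.getvalue()


orders = [  # hypothetical CRM export rows
    {"email": "  Jane.Doe@Example.com ", "order_id": "A-1001",
     "time": "2025-10-02 14:31:00", "value": 129.0, "currency": "USD"},
    {"email": "sam@example.org", "order_id": "A-1002",
     "time": "2025-10-03 09:12:00", "value": 64.5, "currency": "USD"},
]
print(build_upload(orders))
```

Phone numbers are left out on purpose: the platforms document different normalization rules for them, so follow each one’s own guidance before hashing.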

Phase 2: Creative Rapid Prototyping (Hours 12-24)

Objective: Replace generic AI copy with psychologically driven, “Hybrid” creative.

We will not abandon AI; we will upgrade the operator. We will use specific Prompt Engineering Frameworks to generate copy that passes the Turing Test.

Action: The Copy Rewrite using the PAS Framework

Instead of asking AI to “write an ad,” use this specific prompt structure to generate “Human-AI Hybrid” copy:

The “Anti-Robot” Prompt:

“Act as a direct response copywriter for a [Product Category]. Write 3 Facebook ad variations using the PAS (Problem-Agitate-Solution) framework.

- Target Audience: [Insert Persona].

- Tone: Empathetic, gritty, conversational. Avoid all corporate jargon. Do NOT use words like ‘unlock,’ ‘elevate,’ or ‘delve.’

- Constraint: The ‘Problem’ section must focus on the specific pain point of [Insert Pain Point]. The ‘Solution’ must mention our 4.8-star rating.

- Hook: Start with a question that the user is asking themselves right now.”
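If the prompt is reused across products, it helps to keep it as a template so no placeholder slips through unfilled. A minimal sketch: the function name and parameters are illustrative, and the returned string is meant to be pasted into whichever LLM tool you use (no specific API is assumed).

```python
PAS_TEMPLATE = (
    "Act as a direct response copywriter for a {category}. Write 3 Facebook ad "
    "variations using the PAS (Problem-Agitate-Solution) framework.\n"
    "- Target Audience: {persona}.\n"
    "- Tone: Empathetic, gritty, conversational. Avoid all corporate jargon. "
    "Do NOT use words like {banned}.\n"
    "- Constraint: The 'Problem' section must focus on the specific pain point of "
    "{pain_point}. The 'Solution' must mention our {proof}.\n"
    "- Hook: Start with a question that the user is asking themselves right now."
)


def build_pas_prompt(category, persona, pain_point, proof,
                     banned=("unlock", "elevate", "delve")):
    """Fill the PAS prompt, refusing to run with any placeholder left blank."""
    fields = {"category": category, "persona": persona,
              "pain_point": pain_point, "proof": proof}
    missing = [name for name, value in fields.items() if not value.strip()]
    if missing:
        raise ValueError(f"fill in every placeholder; missing: {missing}")
    banned_list = ", ".join(f"'{word}'" for word in banned)
    return PAS_TEMPLATE.format(banned=banned_list, **fields)


print(build_pas_prompt(
    category="meal-prep delivery service",      # hypothetical inputs
    persona="busy parents of young children",
    pain_point="cooking dinner after a long workday",
    proof="4.8-star rating",
))
```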

Action: The Visual Refresh using COAST

If generating new images, use the COAST framework (Context, Objective, Action, Scenario, Task) to ensure the AI creates a scene, not a stock photo.

- Fix: Specify lighting (“cinematic,” “golden hour”), camera angle (“shot from above,” “macro lens”), and use Negative Prompts (e.g., “no text,” “no distorted hands,” “no blurry background”) to clean the output.

Phase 3: Structural Reset & Re-Launch (Hours 24-36)

Objective: Re-structure the campaign architecture to leverage AI Max capabilities correctly.

- Consolidate Signal (The “Data Density” Fix):

- If you have 10 ad sets with $10/day budgets, you are starving the AI. Consolidate them into 2-3 broad ad sets (e.g., “Broad,” “Lookalike 1%,” “Interest Stack”).

- AI requires data density to learn. Consolidating budget allows the AI to see patterns faster.

- Enable “AI Max” / Broad Match:

- Search: Switch high-intent keywords to Broad Match, but only if you layer them with “Audience Signals” (your customer list uploaded in Phase 1). This tells Google: “Go broad, but prioritize people who look like this list.”

- Social: Use “Advantage+ Audience” but set “Engaged Shoppers” as a behavioral filter to prevent the AI from drifting into low-quality audiences.

- The “Sandbox” Method:

- Create a separate campaign (10-20% of budget) dedicated solely to testing. This is your R&D lab. Test wild angles, new AI images, and different offers here.

- Why: This protects your main campaign’s historical data. If a test fails in the Sandbox, it doesn’t hurt the Quality Score of your main revenue driver.
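The consolidation and sandbox advice can be sanity-checked with simple budget math. This sketch reuses the $100/day example above, assumes a hypothetical $50 target CPA, and estimates best-case weekly conversions per ad set (daily budget divided by target CPA, times seven); real delivery will be lumpier.

```python
def weekly_conversions(daily_budget, target_cpa):
    """Best case: every conversion costs exactly the target CPA."""
    return daily_budget / target_cpa * 7


def consolidation_plan(total_daily_budget, target_cpa, n_ad_sets, sandbox_share=0.15):
    """Split budget into a testing sandbox plus n consolidated ad sets."""
    if not 0.10 <= sandbox_share <= 0.20:
        raise ValueError("sandbox_share should stay within 10-20% of budget")
    sandbox = total_daily_budget * sandbox_share
    per_ad_set = (total_daily_budget - sandbox) / n_ad_sets
    return {
        "sandbox_daily": sandbox,
        "per_ad_set_daily": per_ad_set,
        "weekly_conversions_per_ad_set": weekly_conversions(per_ad_set, target_cpa),
    }


fragmented = weekly_conversions(daily_budget=10, target_cpa=50)
plan = consolidation_plan(total_daily_budget=100, target_cpa=50, n_ad_sets=2)
print(f"10 ad sets at $10/day: {fragmented:.1f} conversions per ad set per week")
print(f"2 consolidated ad sets: ${plan['per_ad_set_daily']:.2f}/day each, "
      f"{plan['weekly_conversions_per_ad_set']:.1f} conversions per week each; "
      f"sandbox ${plan['sandbox_daily']:.2f}/day")
```

Per-ad-set volume roughly quadruples (about 1.4 to about 6 conversions a week), but at $100/day the total budget still caps how much data the AI can gather.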

Phase 4: Monitoring & Calibration (Hours 36-48)

Objective: Watch for volatility and adjust.

- The 24-Hour “Hands Off” Rule:

- Do not touch the ads for 24 hours after launch. The dashboard numbers will fluctuate wildly as the AI explores new paths. This is normal. Touching it resets the learning.

- Frequency Check (The Safety Valve):

- Check the new prospecting ads. Are they reaching new people (Frequency ~1.0-1.2)? If Frequency jumps to 2.0+ immediately, the audience is too small. Broaden the targeting.

- The Bot Filter Check:

- Check Google Analytics. If a new ad brings traffic with a 100% bounce rate and 0:00 time-on-site, kill it immediately. The AI has found a “bot pocket” and is optimizing for cheap clicks.
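The same check can be run across every new campaign at once. In GA4, bounce rate is the share of sessions that were not “engaged,” so this sketch derives it from engaged sessions. The row structure, the 50-session minimum, and the exact cut-offs standing in for “100% bounce” and “0:00” are assumptions to tune.

```python
from dataclasses import dataclass


@dataclass
class SourceStats:
    campaign: str
    sessions: int
    engaged_sessions: int        # GA4 "engaged sessions"
    engagement_seconds: float    # total engagement time across all sessions


def bot_pockets(rows, min_sessions=50, bounce_threshold=0.99, max_avg_seconds=1.0):
    """Campaigns whose traffic is (almost) entirely unengaged."""
    suspects = []
    for row in rows:
        if row.sessions < min_sessions:
            continue                                  # too little traffic to judge
        bounce_rate = 1 - row.engaged_sessions / row.sessions
        avg_seconds = row.engagement_seconds / row.sessions
        if bounce_rate >= bounce_threshold and avg_seconds < max_avg_seconds:
            suspects.append(row.campaign)
    return suspects


rows = [  # hypothetical GA4 export
    SourceStats("prospecting_video_v3", 1200, 0, 0.0),
    SourceStats("prospecting_static_v2", 900, 410, 36000.0),
    SourceStats("new_test_tiny", 12, 0, 0.0),
]
print(bot_pockets(rows))
```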

What the Best AI Campaigns Get Right

Analyzing successful case studies reveals distinct patterns that separate high-performing AI advertising campaigns from failures. Leading brands like ClickUp, Royal Canin, and Klook, as well as clients of Power Reach AI, share a common architectural philosophy.

1. The Shift from Keywords to Intent (The “AI Max” Strategy)

Top performers have abandoned the obsession with “Exact Match” keywords. They understand that AI has evolved to understand semantic intent.

- Case Study: Royal Canin, a pet nutrition brand, utilized AI search-term matching to capture long-tail queries like “how many times a day to feed a puppy.” A traditional keyword strategy might have missed this purely informational query. However, the AI understood the intent implies a high-value potential customer (a new puppy owner). By dynamically tailoring ad copy to answer this specific question, they increased conversions by 263% while lowering CPA by 73%.

- Insight: The win wasn’t the keyword; it was the context. The best campaigns trust the AI to find the meaning behind the search, not just the string of text.

2. The Hybrid Creative Workflow (Strategy + Scale)

The best agencies utilize a “Human-Strategy, AI-Execution” model. They do not let AI drive the car; they let it build the road.

- Ideation (Human): Strategists define the emotional angle (e.g., “The anxiety of a new business owner”).

- Generation (AI): AI tools are used to generate 20+ variations of hooks and headlines based on that angle.

- Curation (Human): Humans select the best 3 variants and refine the tone to ensure empathy and brand voice alignment.

- Result: Research shows this hybrid approach yields ~2.3x higher CTR than pure AI-generated content and significantly better conversion rates than human-only content due to the sheer volume of testing allowed.

3. First-Party Data Sovereignty (The “Data Fortress”)

Successful AI campaigns do not rely solely on platform data. They build a “Data Fortress.” They actively collect first-party data (emails, phone numbers) via lead magnets and quizzes, and they feed this data back into the ad platforms via CAPI.

- Strategic Advantage: This creates a “truth signal” that allows the AI to optimize for business revenue (e.g., “High LTV Customer”) rather than just platform metrics (e.g., “Link Click”). Because the data is sent server-side rather than through the browser, it is far less exposed to iOS and browser privacy filters, giving the AI a clearer view of success.

Table 3: The Evolution of Ad Strategy

| Feature | The “Old Way” (Failing) | The “AI-Native” Way (Winning) |
| --- | --- | --- |
| Targeting | Granular, manual demographics (Age, Zip). | Broad targeting with AI Audience Signals. |
| Bidding | Manual CPC / Max Clicks. | Value-Based Bidding (tROAS) with Offline Data. |
| Creative | 1-2 static ads per month. | Dynamic Creative Optimization (DCO) with 10+ variants. |
| Keywords | Exact Match obsession. | Broad Match + Negative Keywords + Intent Matching. |
| Measurement | Last-Click Pixel attribution. | Data-Driven Attribution + Media Mix Modeling (MMM). |

Common Mistakes When Scaling

Once the 48-hour fix stabilizes the campaign, the temptation is to aggressively increase the budget. This is where the second wave of failure often occurs. Scaling an AI advertising campaign is a mathematical operation, not just a financial one.

1. Scaling Too Fast (Breaking the Algorithm’s Prediction Model)

AI algorithms operate on historical trend lines. If you have been spending $100/day, the AI predicts outcomes based on that volume. If you suddenly scale to $500/day, the prediction model breaks. The AI enters a “re-learning phase” because its previous probabilities are no longer valid for the new volume required.

- The Fix: Scale budgets by 10-20% every 48-72 hours. This “stair-step” approach allows the AI to find new inventory incrementally without resetting its learning history.
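Applied to the $100-to-$500 example above, the stair-step rule looks like this. A minimal sketch; it compounds each increase on the previous day’s budget, which is one reasonable reading of “10-20% every 48-72 hours.”

```python
def stair_step(start, target, step=0.20):
    """Daily budgets from start to target, raising by at most `step` each time."""
    if not 0 < step <= 0.20:
        raise ValueError("keep each increase at or below 20%")
    budgets = [float(start)]
    while budgets[-1] < target:
        budgets.append(min(budgets[-1] * (1 + step), float(target)))
    return budgets


plan = stair_step(100, 500)
increases = len(plan) - 1
print(" -> ".join(f"${b:,.0f}" for b in plan))
print(f"{increases} increases: about {2 * increases}-{3 * increases} days "
      f"at one step per 48-72 hours")
```

Even at the aggressive end of the range, a 5x budget increase takes nine steps, roughly 18 to 27 days, rather than one afternoon.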

2. Ignoring “Brand Cannibalization”

As PMax or Advantage+ campaigns scale, they often begin to over-bid on your own brand name because it converts easily and cheaply. You might see ROAS skyrocket to 1000%, but this is an illusion. You are simply paying for traffic that was already searching for you organically.

- The Fix: Monitor the “Brand vs. Generic” split. If branded queries account for more than 15-20% of spend in a campaign meant to find new (non-brand) customers, apply strict negative keyword lists or brand exclusions so the AI has to hunt for new customers.
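Monitoring the brand split can start from search-terms data. This sketch assumes a hypothetical list of (query, cost) rows and a hand-maintained set of brand tokens (here the made-up brand “acme”), and uses the lower end of the 15-20% threshold.

```python
def brand_share(search_terms, brand_tokens):
    """Share of spend on queries that contain any brand token."""
    total = sum(cost for _, cost in search_terms)
    brand = sum(cost for query, cost in search_terms
                if set(query.lower().split()) & brand_tokens)
    return brand / total if total else 0.0


terms = [  # hypothetical (query, cost) rows
    ("acme running shoes", 300.0),
    ("acme discount code", 150.0),
    ("trail running shoes", 400.0),
    ("waterproof hiking boots", 150.0),
]
share = brand_share(terms, brand_tokens={"acme"})
print(f"Brand share of spend: {share:.0%}")
if share > 0.15:
    print("Above threshold: add brand terms as negatives or brand exclusions")
```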

3. Ethical Oversights and the “Creepy” Factor

AI allows for hyper-personalization, but scaling this can lead to consumer backlash. There is a fine line between “relevant” and “creepy.” Over-personalization (e.g., “We saw you looking at those red shoes 5 minutes ago”) can trigger negative sentiment and privacy concerns.

- The Fix: Maintain strict ethical guidelines on data usage. Ensure all first-party data used for training is consent-based and compliant with GDPR/CCPA regulations. Use personalization to add value (e.g., “Here is a guide on how to use those shoes”) rather than just to stalk.

4. Platform Monogamy (Single Point of Failure)

Relying solely on one platform (e.g., only Meta) creates a single point of failure. AI advertising campaigns perform best when they have cross-channel signals. A user might see a brand on TikTok (Awareness), search for it on Google (Intent), and buy.

- The Fix: Diversify. A robust strategy might use Google Search to capture demand and Meta/TikTok to generate demand. Agencies like Power Reach AI specialize in integrating these channels so the AI on one platform informs the strategy on another, creating a holistic ecosystem.

Conclusion: From “Black Box” to “Glass Box”

The narrative that AI will “replace” marketers is fundamentally flawed. AI advertising campaigns do not fail because the technology is inadequate; they fail because they are often deployed as a substitute for strategy rather than an accelerant of it. The campaigns that win in the coming years will be those that treat AI as a powerful engine that requires high-octane fuel (clean data) and a skilled driver (human strategy).

The “Black Box” of AI marketing—where money goes in and results remain a mystery—can be transformed into a “Glass Box.” By ensuring data integrity, respecting the funnel, demanding creative excellence, and auditing regularly, businesses can turn volatility into predictability.

Key Takeaways for Immediate Action:

- Data is Destiny: Fix your pixel, CAPI, and offline data loops before spending another dollar.

- Creative is the New Targeting: Use AI to generate volume, but use human insight to ensure quality and emotional resonance.

- Respect the Funnel: Don’t starve your prospecting to feed your retargeting.

- Audit Regularly: The digital landscape shifts daily; your audit process must be continuous.

Need a Professional Second Opinion?

For businesses seeking to implement these changes without the operational burden, Power Reach AI offers the specialized infrastructure required to handle this transition.

- powerreach.ai: Verify your digital foundation before scaling ad spend.

- Deploy AI Agents: Automate lead validation and appointment setting to ensure you never miss a conversion.

The future of advertising is not about AI versus Humans. It is about AI-Augmented Humans outperforming everyone else. The tools are ready. The question is: is your strategy?

Disclaimer: The strategies outlined in this report are based on current best practices as of late 2025. Algorithms update frequently; continuous testing is required.